Activity 3: Pose Recognition

Train and Test the Pose Classifier

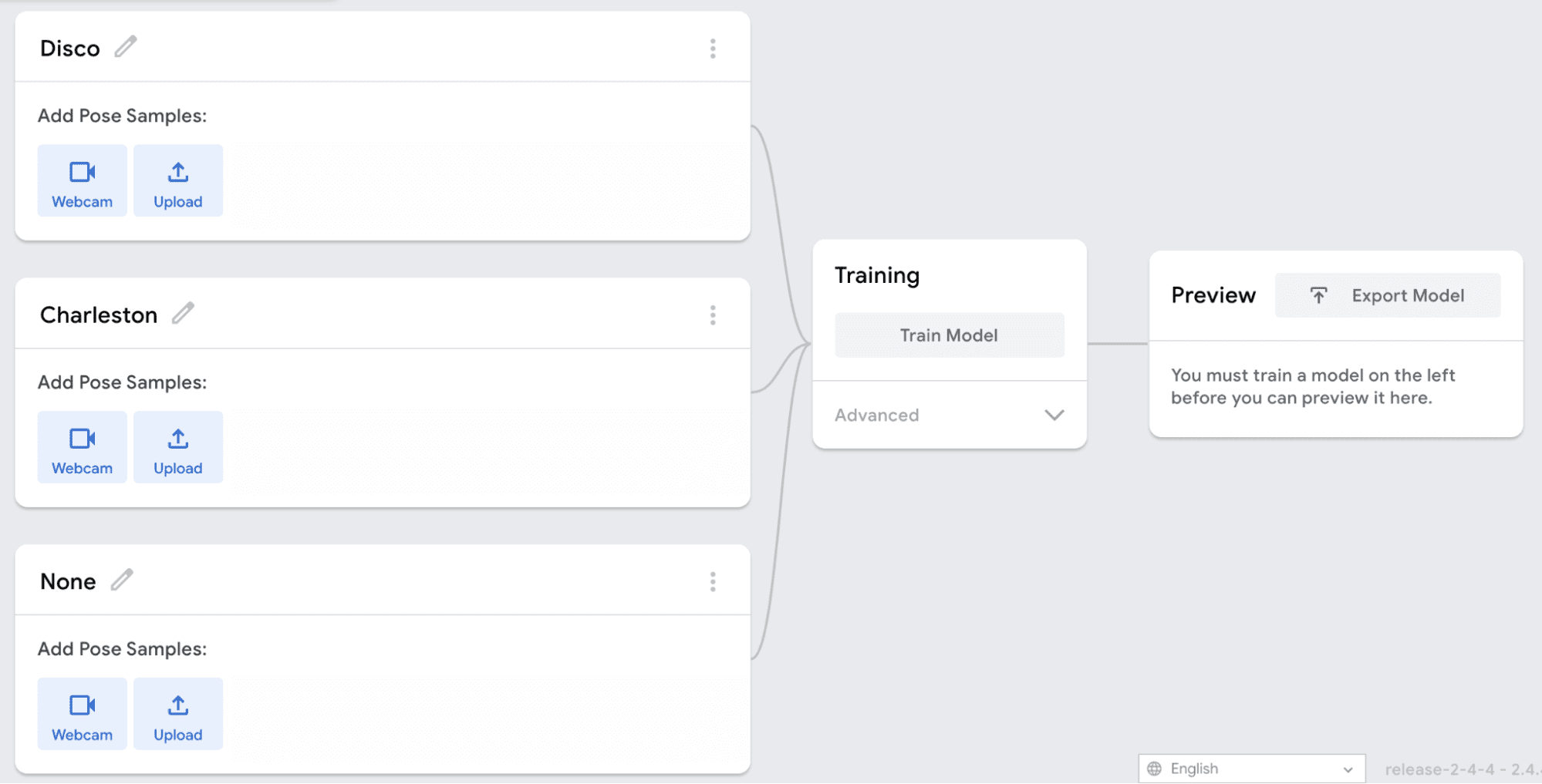

First, open Google Teachable Machine and start a new pose project to create your pose recognition model.

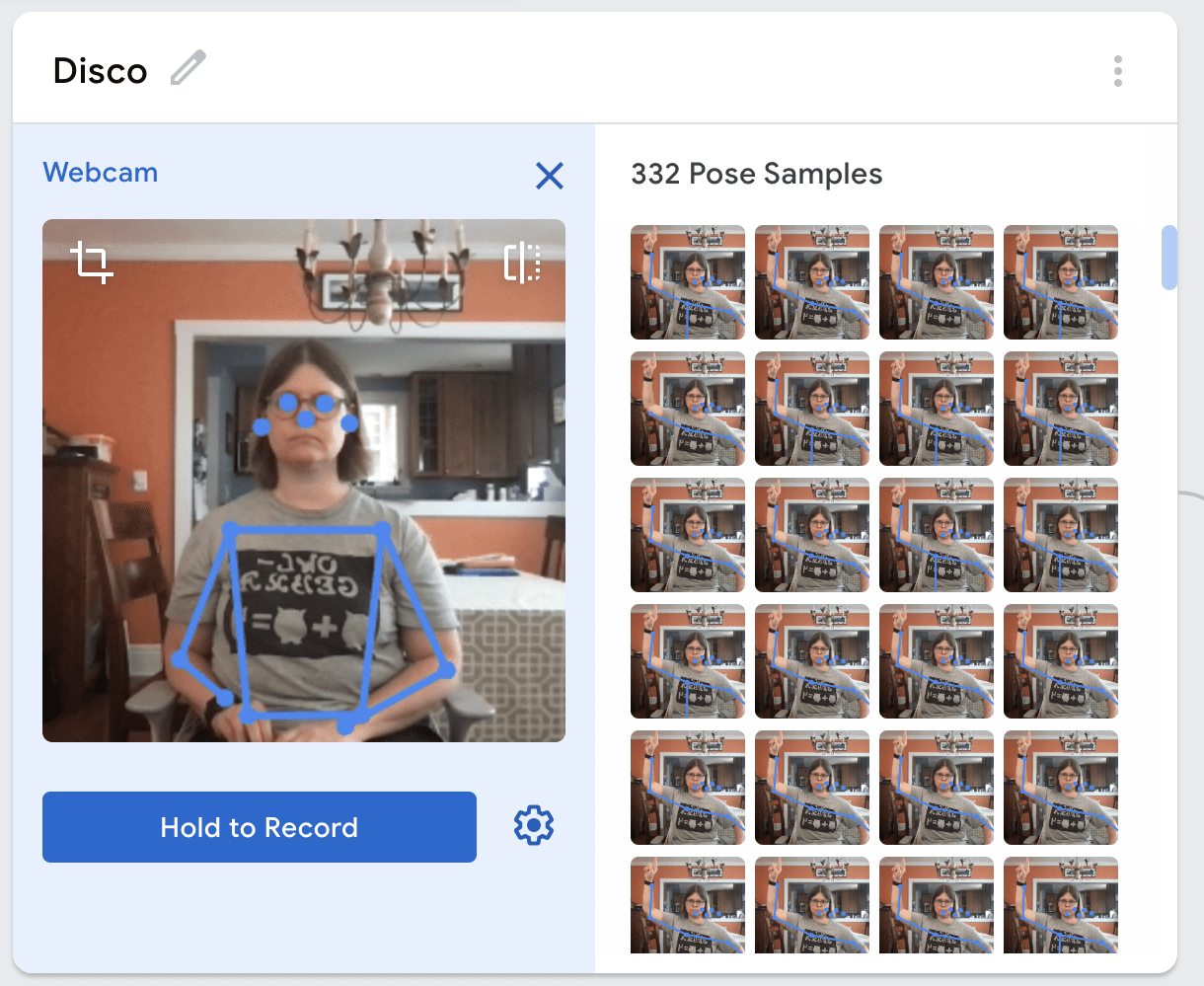

Next, get ready to record your poses. Stand in the center of the webcam view, far enough from the webcam that blue lines appear over your trunk and arms in the preview. These lines show that Teachable Machine is tracking your skeleton. You will need to stay at about this distance from the camera when you use your model, so you may want to mark the position with masking tape.

Add samples for each of your classes, aiming for 200-300 samples per class. Because you must stand some distance from the camera, you may need a partner to help you record the samples.

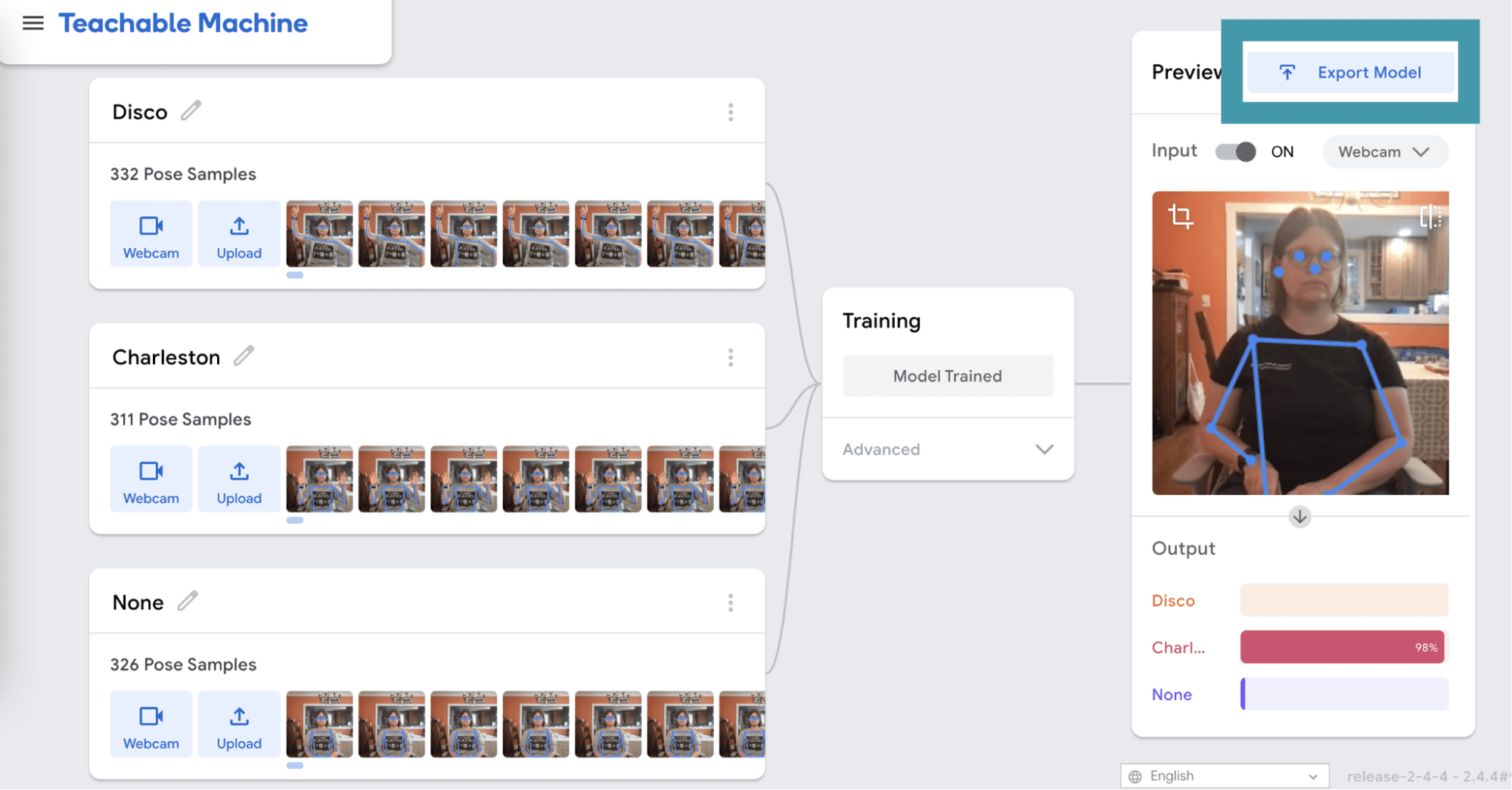

Next, train the model. You may notice that this takes longer than training the image and audio classifiers did. Leave the Teachable Machine tab open while the model is training, even if your browser warns that the page is unresponsive.

Keep all of the default settings and click Upload my model. After your model has uploaded, copy the shareable link. You will need this link to create a Snap! project that uses your model. Remember to save your model so that you can reference or change it later.

Using the Pose Classifier in Snap!

Using the pose classifier in Snap! is very similar to using the image or audio classifiers. If you are using the BlueBird Connector, open this project in Snap! and save a copy for yourself. Then click on the Settings menu and enable JavaScript extensions.

If you are using snap.birdbraintechnologies.com, import this project into Snap!.

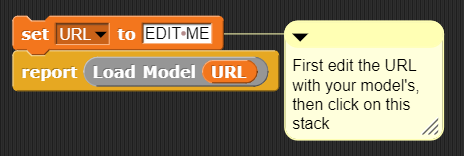

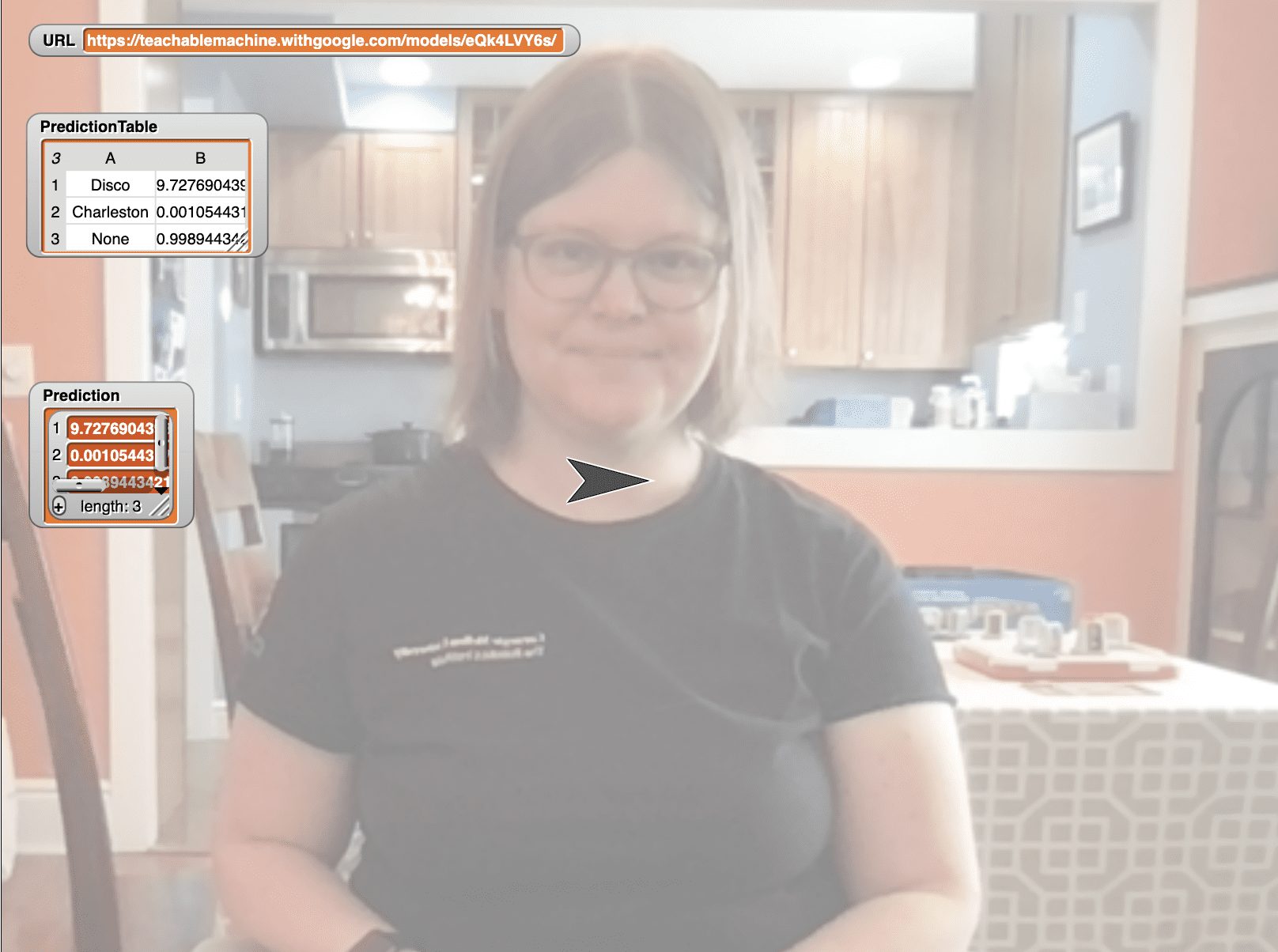

Modify the top script so that the URL variable is set to the shareable link for your classifier. Then click the stack of blocks to run the top script. You only need to run this script once; it loads the libraries and your model. If the model loads correctly, you will see the message "Model loaded successfully". If you do not see this message, check that the URL is correct and click the stack again.

Challenge: Write a program that makes the Finch respond to each of your poses. As you test your program, investigate what happens when you change your position in the camera view or move closer to or farther from the camera.
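One way to plan the challenge program is to think of it as a repeated decision: find the pose the model considers most likely, then pick a matching Finch behavior. The sketch below shows that decision logic in Python rather than Snap! blocks. It is only an illustration, assuming hypothetical class names ("Arms Up", "Arms Out", "Arms Down"), placeholder action strings in place of real Finch blocks, and an assumed confidence cutoff of 0.8.

```python
# Hypothetical sketch of the challenge logic, not the Snap! project itself.
# Class names, action strings, and the 0.8 cutoff are illustrative assumptions;
# in Snap! you would use the classifier blocks and Finch blocks instead.

CONFIDENCE_THRESHOLD = 0.8  # assumed cutoff; adjust it while testing

# Hypothetical mapping from each pose class to a Finch behavior.
POSE_ACTIONS = {
    "Arms Up": "move forward",
    "Arms Out": "turn left",
    "Arms Down": "stop",
}


def choose_action(predictions: dict[str, float]) -> str:
    """Return the action for the most likely pose, or "stop" if the model is unsure."""
    # Find the class with the highest probability.
    best_pose = max(predictions, key=predictions.get)
    if predictions[best_pose] < CONFIDENCE_THRESHOLD:
        return "stop"  # no pose is clear enough, so keep the Finch still
    # Unknown class names also fall back to "stop".
    return POSE_ACTIONS.get(best_pose, "stop")


if __name__ == "__main__":
    print(choose_action({"Arms Up": 0.92, "Arms Out": 0.05, "Arms Down": 0.03}))
    print(choose_action({"Arms Up": 0.45, "Arms Out": 0.40, "Arms Down": 0.15}))
```

Stopping the Finch when no pose clears the cutoff is just one design choice. While you investigate position and distance, you may find that the top probability drops, and a cutoff like this is one way to keep the Finch from reacting to uncertain predictions.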